Noushad Sojib
I train robots to recover from mistakes using imperfect human demonstrations. Robotics ML Engineer building data-efficient manipulation policies by fine-tuning VLA models (π0.5, OpenVLA, GR00T) on real Franka robots and extracting signal from noisy teleoperation data. M.Sc., University of New Hampshire (CARL). Built humanoid robots from scratch and founded RoboSUST at SUST. Open to robotics ML / robot learning roles; best reached by email.
Resume / Email / GitHub / Google Scholar / LinkedIn

Robot Learning

Vision–Language–Action Models
Introduced a modified VLA loss function that better leverages imperfect demonstrations and enables recovery from errors, improving robustness in real-world manipulation. Built scalable data pipelines to convert custom datasets into the LeRobot format and fine-tuned π0.5, OpenVLA, and GR00T N1.5 on Franka robot tasks. VLA getting-started / BC tutorial

Diffusion Policy from Scratch
Implemented DDPM-based and image-conditioned diffusion policies from first principles and evaluated them on the Push-T and RoboMimic manipulation benchmarks. GitHub

Learning from Non-Expert Human Demonstrations
Collected 1,000+ demonstrations from 30+ lay users via SpaceMouse teleoperation, both in simulation and on real Franka and Sawyer robots. Developed a frequency-domain (FFT-based) method to detect and remove low-quality segments (~300× faster than prior work). Evaluated BC-RNN, BC-Transformer, and Diffusion Policy on Franka and Sawyer robots. Manuscript under review. Publications:

Robots from Scratch
Limited access to robotic platforms led me to build robots from scratch and to found RoboSUST, where I led the design and deployment of low-cost robotic systems.

Ribo: 24-DOF Humanoid Robot
Designed and built a full humanoid robot capable of upper-body manipulation and human-interactive behaviors.
Role: Team Lead (hardware, software, and interaction interface). Publication at IEEE R10-HTC 2017.
Key Contributions: Led hardware and software development of a 24-DOF humanoid platform. Implemented control for coordinated arm and hand motion. Designed the user-facing interaction interface.

Lee: A Biped Walking Robot
Built a biped robot focused on achieving stable walking with minimal hardware cost.
Role: Team Lead (mechanical design, gait control, and software).
Key Contributions: Designed the mechanical structure for balance and locomotion. Implemented basic gait generation and control. Optimized the design around low-cost components.

Kiddo
Interactive educational robot designed to engage children through programmable behaviors, built and validated both in simulation and on physical hardware.
Role: Solo Designer & Developer. Publication at IEEE IRCE 2020.

Hardware Design
Experience building robots from scratch led me to design embedded systems that support reliable deployment; several of these systems have been published and used on research platforms.

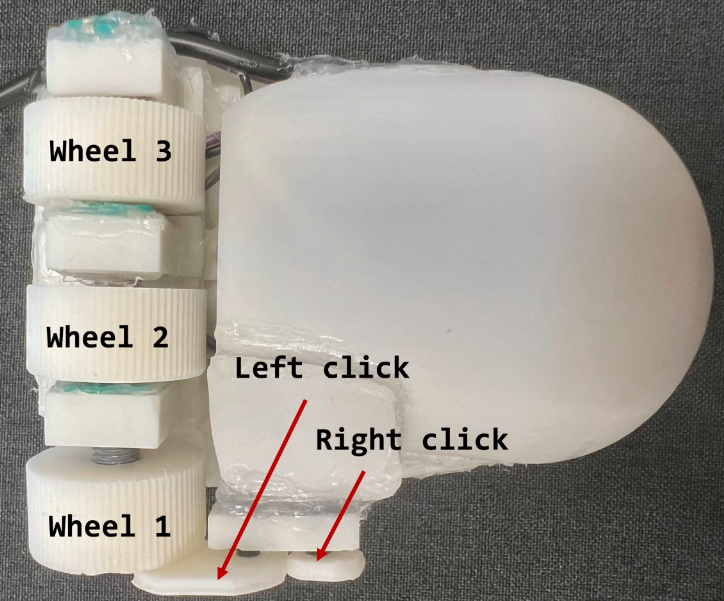

3Wheel Mouse
Three-wheeled input device that enables efficient, versatile non-visual computer interaction for blind users.
Role: Designer & Prototype Builder; published at ACM UIST 2024.
Islam, Md Touhidul, et al. “Wheeler: A three-wheeled input device for usable, efficient, and versatile non-visual interaction.” ACM UIST 2024. Paper & Video

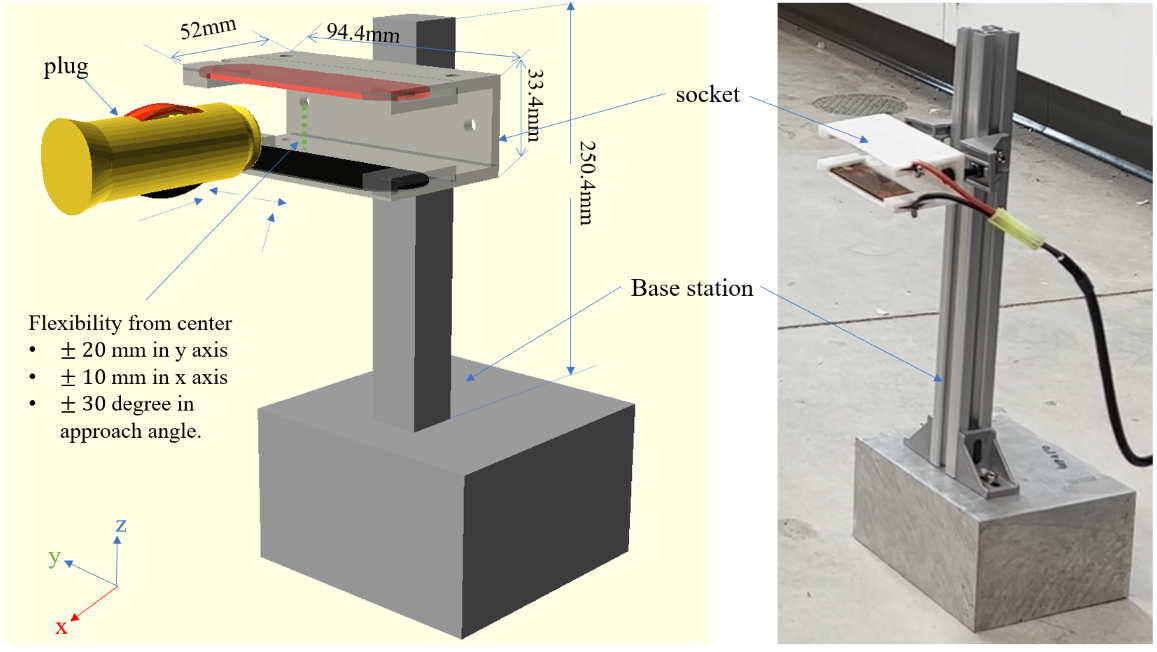

Charging Dock
Robust, low-cost autonomous charging dock for mobile robots, enabling continuous operation without human intervention.
Role: Designer & Prototype Builder; deployed on the Hello Stretch and Jackal robots. code / website

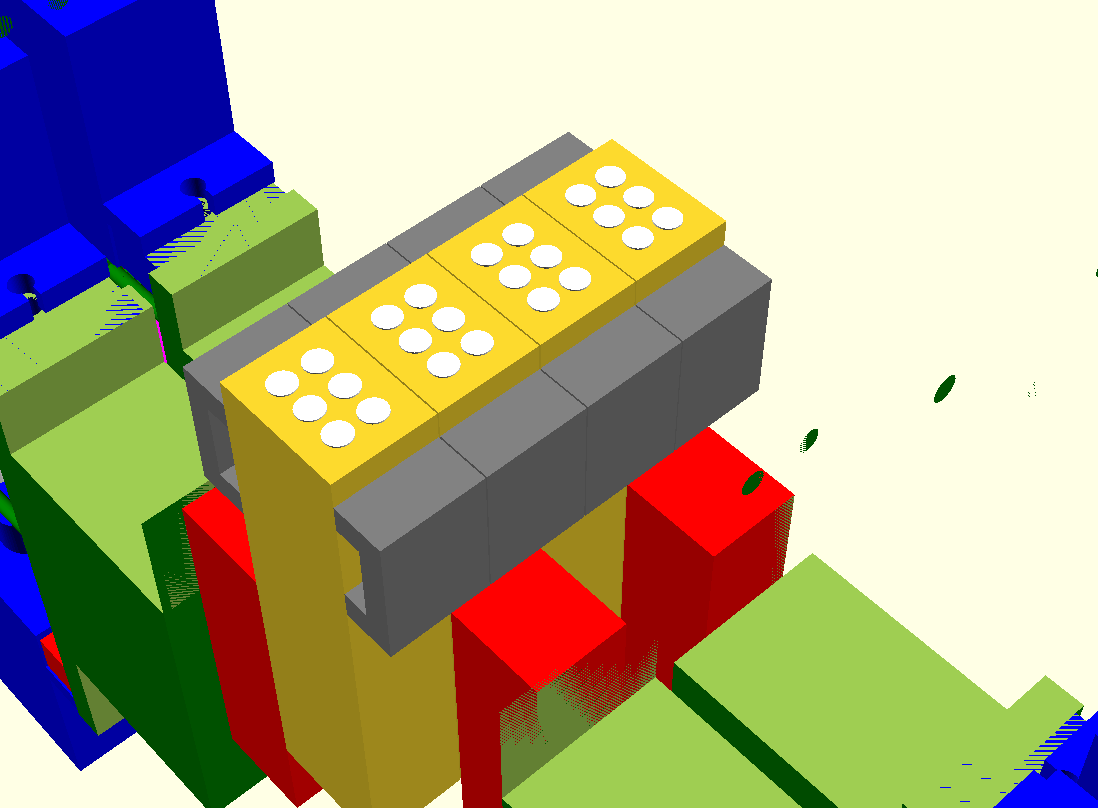

Low-cost Braille Display
Low-cost single-cell Braille display that makes digital Bangla text accessible to visually impaired readers.
Role: Designer & Prototype Builder; published at IEEE ICBSLP 2018.
Sojib, Noushad, and M. Zafar Iqbal. “Single cell bangla braille book reader for visually impaired people.” IEEE ICBSLP 2018. Paper

Design and source code from Jon Barron's website